🧭 THIS WEEK AT BuildProven

Howdy, and welcome to all the new readers who have joined since we became BuildProven! I hope you like it; feedback is welcome.

Let’s get into how to actually build something!

🧰 Worth Your Click

Here are a few things I found recently:

OpenAI released GPT-5.4.

My LLM Coding Workflow Going Into 2026 - The best single article on AI-assisted development workflow. Spec first, small chunks, test everything.

Not All AI-Assisted Programming Is Vibe Coding - The key distinction: if you review, test, and understand the code, it is not vibe coding. It is software development. Important framing.

🗺️ FEATURED INSIGHT

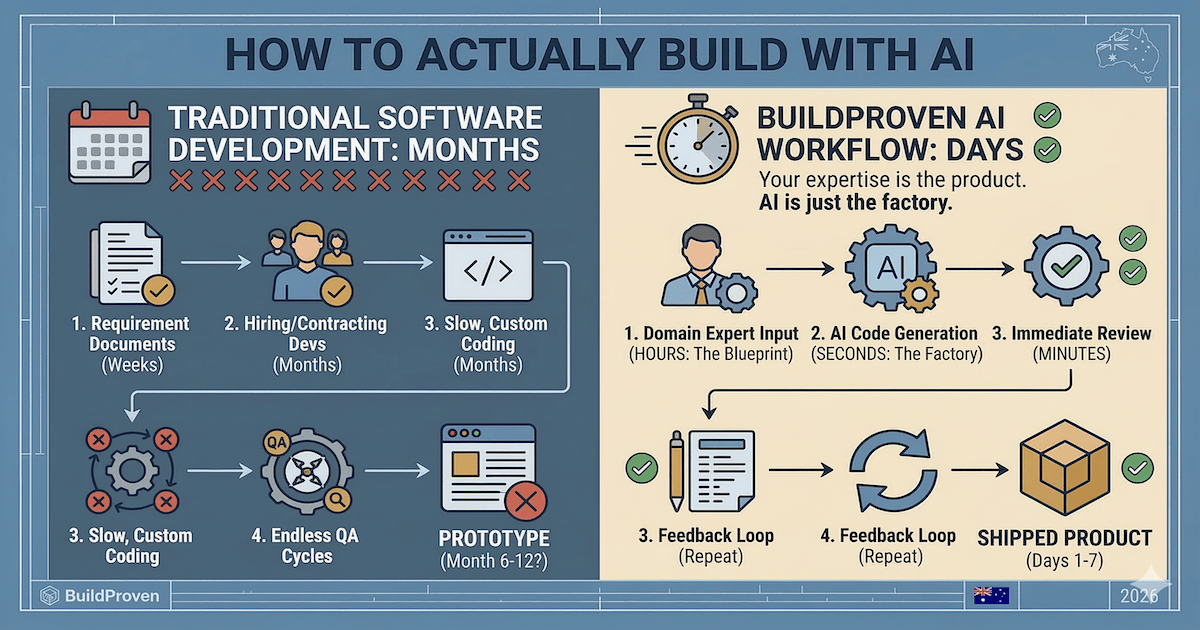

I have built or managed software the traditional way for 20+ years: requirements gathering, architecture docs, sprint planning, code reviews, QA cycles, staging environments, deployment pipelines. A feature that takes an afternoon to describe can take months to ship.

But if you are one person building a product from your domain expertise, most of that process is designed to solve problems you do not have.

You do not have a team of twelve who need to stay synchronized. You do not have a compliance department reviewing your architecture decisions. You do not have a QA team with a three-week backlog.

You have an idea, twenty years of knowing exactly what the product should do, and AI tools that can write code from plain English.

The workflow that works

After testing this across multiple builds and reading what experienced developers like Addy Osmani (Google) are doing, I see a clear pattern emerging. It is not complicated: five steps.

Step 1: Write a one-page spec first.

Not a twenty-page requirements document. One page. What does the tool do? Who is it for? What are the three core features? What does success look like?

This is where your domain expertise is the advantage. And of course, you can use AI to help write the spec by prompting it with your basic ideas.

Step 2: Break it into small pieces.

Do not prompt the AI with "build me a complete project management tool." That is how you get a mess.

Instead: "Build me a form that captures a client name, project type, and deadline. Save it to a database. Show a confirmation screen."

One feature. One prompt. Test it. Move to the next piece.
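To make "one feature" concrete, here is a minimal Python sketch of what the back end of that intake-form prompt might boil down to, assuming SQLite as the database. The table name `intake` and the functions `create_schema` and `save_intake` are hypothetical, not what any particular AI tool will generate. The point is that a chunk this small can be read and tested in a few minutes.

```python
import sqlite3
from datetime import date


def create_schema(conn: sqlite3.Connection) -> None:
    # One table for the one feature: client intake records (hypothetical schema).
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS intake (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            client_name TEXT NOT NULL,
            project_type TEXT NOT NULL,
            deadline TEXT NOT NULL
        )
        """
    )


def save_intake(conn: sqlite3.Connection, client_name: str,
                project_type: str, deadline: date) -> str:
    # Store one submission and return the text for the confirmation screen.
    cur = conn.execute(
        "INSERT INTO intake (client_name, project_type, deadline) VALUES (?, ?, ?)",
        (client_name, project_type, deadline.isoformat()),
    )
    conn.commit()
    return f"Saved #{cur.lastrowid}: {client_name} ({project_type}), due {deadline.isoformat()}"


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")  # in-memory database, enough to test this one piece
    create_schema(conn)
    print(save_intake(conn, "Acme Ltd", "Website refresh", date(2026, 3, 31)))
```

Once this piece works, the next prompt (say, a list of saved clients) builds on a foundation you have already checked.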

Addy Osmani calls this "waterfall in 15 minutes" - you do the planning phase fast because you already know the domain, then execute in small, testable chunks.

Step 3: Test every piece before moving on.

Treat AI-generated code like it came from a new hire. It is probably 80% right. The 20% that is wrong will bite you later if you do not catch it now.

Click every button. Fill in every form. Try to break it. Fix the issues before adding the next feature. Also ask the AI to write an automated test suite, so the same checks run every time you change something.
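Here is a minimal sketch of what "try to break it" looks like once it is written down as an automated test, using Python's built-in `unittest`. The `validate_intake` function and its rules (name and project type required, no past deadlines) are hypothetical examples for a client intake form, not part of any specific tool.

```python
import unittest
from datetime import date


def validate_intake(client_name: str, project_type: str,
                    deadline: date, today: date) -> list[str]:
    # Return a list of problems; an empty list means the submission is valid.
    errors = []
    if not client_name.strip():
        errors.append("Client name is required.")
    if not project_type.strip():
        errors.append("Project type is required.")
    if deadline < today:
        errors.append("Deadline cannot be in the past.")
    return errors


class ValidateIntakeTests(unittest.TestCase):
    TODAY = date(2026, 1, 15)  # fixed "today" so results never depend on the clock

    def test_valid_submission_passes(self):
        self.assertEqual(
            validate_intake("Acme Ltd", "Website", date(2026, 2, 1), self.TODAY), [])

    def test_blank_name_is_rejected(self):
        # Whitespace-only input should not slip through.
        self.assertIn("Client name is required.",
                      validate_intake("   ", "Website", date(2026, 2, 1), self.TODAY))

    def test_past_deadline_is_rejected(self):
        self.assertIn("Deadline cannot be in the past.",
                      validate_intake("Acme Ltd", "Website", date(2025, 12, 31), self.TODAY))


if __name__ == "__main__":
    unittest.main(argv=["validate_intake_tests"], exit=False)
```

Every bug you find by hand is worth turning into one more test like these, so it cannot quietly come back.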

Step 4: Keep a running document of what you have built.

Keep telling the AI to ‘remember’ the key things that work and the things that don’t, and record them in that document. Do this and the coding will get better as you go.

This sounds basic. It is the single biggest difference between people who ship and people who start over every session because the AI "forgot" what they were building.
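A minimal sketch of that running document, assuming a plain Markdown file named `PROJECT_NOTES.md` (a hypothetical name) that you append to as you go and paste back in at the start of each session. The helpers `log_note` and `session_preamble` are illustrative only; some AI coding tools can load a notes file for you, so check your tool's documentation.

```python
from datetime import date
from pathlib import Path
from typing import Optional


def log_note(notes: Path, kind: str, text: str, when: Optional[date] = None) -> None:
    # Append one dated line, e.g. "- 2026-01-15 [works] Intake form saves to SQLite".
    when = when or date.today()
    with notes.open("a", encoding="utf-8") as f:
        f.write(f"- {when.isoformat()} [{kind}] {text}\n")


def session_preamble(notes: Path) -> str:
    # Build the text to paste at the top of a new AI session so it does not "forget".
    history = notes.read_text(encoding="utf-8") if notes.exists() else "(no notes yet)\n"
    return "Here is what we have built so far. Keep these decisions:\n" + history


if __name__ == "__main__":
    notes = Path("PROJECT_NOTES.md")  # hypothetical file name
    log_note(notes, "works", "Intake form saves to SQLite")
    log_note(notes, "avoid", "One giant prompt for the whole dashboard")
    print(session_preamble(notes))
```

The tags in square brackets are a hypothetical convention; anything that separates "this works" from "don't do this again" serves the same purpose.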

Step 5: Ship ugly, then polish.

Get the core working first. No styling, no animations, no edge cases. Just the thing that proves the idea works.

Then go back and make it look professional. Building UI with AI is a bit tricky, so that is a topic for another edition. You also want to run as many tests and security checks as possible at this stage, if not earlier.

The old way vs the new way

Traditional approach to building a client intake tool:

Requirements gathering (2 weeks). Architecture design (1 week). Development sprint (3 weeks). QA and testing (2 weeks). Deployment and fixes (1 week). Total: about 9 weeks if nothing goes wrong.

AI-assisted approach to the same tool:

One-page spec (30 minutes). Build form and database (1 hour). Build dashboard view (1 hour). Test and fix (2 hours). Style and polish (1 hour). Deploy (30 minutes). Total: about 6 hours across a weekend.
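A quick check of the arithmetic behind the two totals, using the phase estimates listed above. Each approach is summed in its own unit (weeks vs hours), so no working-week assumption is needed.

```python
# Phase estimates from the two approaches above.
traditional_weeks = {
    "Requirements gathering": 2,
    "Architecture design": 1,
    "Development sprint": 3,
    "QA and testing": 2,
    "Deployment and fixes": 1,
}
ai_assisted_hours = {
    "One-page spec": 0.5,
    "Build form and database": 1,
    "Build dashboard view": 1,
    "Test and fix": 2,
    "Style and polish": 1,
    "Deploy": 0.5,
}

print(f"Traditional: {sum(traditional_weeks.values())} weeks")  # 9 weeks
print(f"AI-assisted: {sum(ai_assisted_hours.values()):g} hours")  # 6 hours
```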

Where people get it wrong

The two failure modes I see most:

No spec, just vibes. You open Cursor or Bolt.new and start prompting without knowing what you are building. The AI happily builds you something - but it is not what you needed, and now you are debugging code you did not write and do not understand.

Trying to build everything at once. One massive prompt. The AI generates 2,000 lines of code. Half of it works. You cannot tell which half. Start over.

Your Turn

Build a meeting cost calculator this week.

Prompt 1:

Build a single-page web app called "Meeting Cost Calculator". It needs a form with these fields:

- Number of attendees (number input, default 6)

- Average hourly salary (number input, default 75)

- Meeting duration in minutes (dropdown: 15, 30, 45, 60, 90)

- A "Calculate" button

When clicked, show the total cost of the meeting in large bold text. Format as currency.

Prompt 2:

Add a second section below the result that shows:

- Cost per minute of this meeting

- "What else could this money buy?" with 3 fun comparisons (e.g., "That's 47 coffees" or "That's 2 months of Netflix")

- Make the comparisons relevant and slightly funny

Prompt 3:

Make it look professional. Clean white background, one accent colour, mobile-friendly. Add a subtle animation when the result appears.

Three prompts. Takes about ten minutes. You will have a working tool you can actually show people at your next meeting.
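If you want a reference answer to test the AI's version against, here is a minimal Python sketch of the math behind the three prompts. It assumes total cost = attendees × hourly salary × (minutes ÷ 60). The comparison prices are hypothetical placeholders, so swap in your own. `Decimal` keeps the currency amounts rounding to exact cents.

```python
from decimal import Decimal, ROUND_HALF_UP

CENTS = Decimal("0.01")

# Hypothetical prices for the "What else could this money buy?" section.
COMPARISONS = {
    "coffees": Decimal("5.00"),
    "months of a streaming subscription": Decimal("15.00"),
    "large pizzas": Decimal("20.00"),
}


def meeting_cost(attendees: int, hourly_salary: Decimal, minutes: int) -> Decimal:
    # Total = attendees × hourly salary × (minutes / 60), rounded to cents.
    total = attendees * hourly_salary * minutes / 60
    return total.quantize(CENTS, rounding=ROUND_HALF_UP)


def cost_per_minute(attendees: int, hourly_salary: Decimal) -> Decimal:
    # Every minute costs attendees × hourly salary / 60.
    return (attendees * hourly_salary / 60).quantize(CENTS, rounding=ROUND_HALF_UP)


def as_currency(amount: Decimal) -> str:
    # e.g. Decimal("1234.5") -> "$1,234.50"
    return f"${amount:,.2f}"


def comparisons(total: Decimal) -> list[str]:
    # How many whole units of each item the meeting's cost would buy.
    return [f"That's {int(total // price)} {item}" for item, price in COMPARISONS.items()]


if __name__ == "__main__":
    total = meeting_cost(6, Decimal("75"), 60)  # prompt defaults, 60-minute option
    print(as_currency(total))  # $450.00
    print(as_currency(cost_per_minute(6, Decimal("75"))))  # $7.50
    for line in comparisons(total):
        print(line)
```

With the defaults from Prompt 1 and a 60-minute meeting, the AI's app should show $450.00 in total and $7.50 per minute; if it shows anything else, that is your first bug to fix.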

Weekly build logs from a 25-year program manager who codes with AI.

— Brett

👉 Hit “Reply” and share your experience — I read every one!